Designing a compliance platform when every pattern breaks

Every user struggled with the corporate relationship mapping section. Not some users. Every user. That's not a domain complexity problem. That's a design problem.

Problem Statement

The service was a supplier information and compliance platform: a system that enables organisations and sole traders to register, declare key information about their business, and share that data with a procurement body for compliance purposes. Every supplier wishing to participate in major procurement contracts needed to complete it.

That meant the people using it were not casual users. They were business owners, finance directors, and legal representatives who knew their domain but were navigating an unfamiliar digital process under commercial pressure.

The design challenge was substantial: the service collected complex, interdependent business data across multiple journeys, constrained by policy requirements, built on an established design system with governance rules around component usage. Getting the design wrong didn’t just mean a bad experience; it meant suppliers unable to participate in contracts, and commercial processes failing.

Two types of constraint shaped every decision. The first was the design system: the service was built using an established component library with governance rules, not a blank canvas. Decisions about which patterns to use, and when to deviate from them, required justification. The second was policy: some design decisions couldn’t be changed regardless of user feedback; others could only be changed if the change could be shown to still meet the policy intent.

Role and Process

Before redesigning the corporate relationship mapping section, we mapped the relationship types and modelled the decision logic. We needed to understand how the relationships actually worked before we could reflect that in the interface.

The approach was iterative, with a research round after each significant change. Every decision was documented. Nine design histories were published, each one capturing the problem, the options considered, the research finding, and the outcome. When we broke from convention, as we did in the Financial and Economic Standing section, we documented the rationale and tested it before committing.

Design Solutions

The crisis: when 100% of users failed

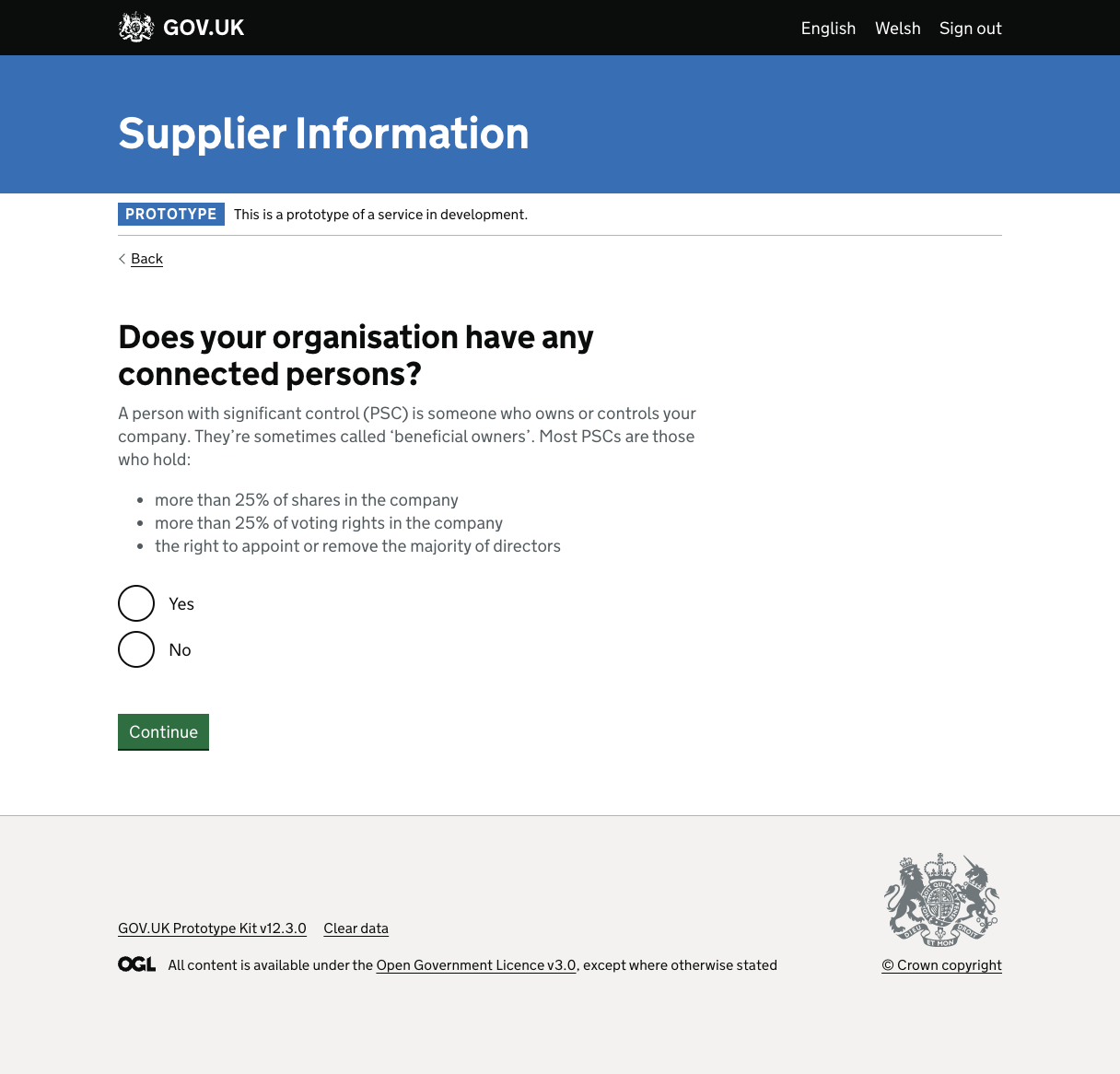

The most critical part of the service was a section called Connected Persons: the declaration of corporate relationships, ownership structures, and associated individuals relevant to the supplier entity.

In the first round of user research, every single user struggled with this section. Not some. Not most. Every user experienced difficulty of some kind: confusion about what was being asked, uncertainty about how to proceed, errors in their responses.

This is not a data point you can attribute to user error. When 100% of users fail, the design has failed. Full stop.

The problem was structural. The Connected Persons section required users to map relationships between legal entities and individuals, specifying who owns what, who controls what, and who is associated with whom, using an interface that presented this as a sequence of independent questions. But the relationships aren’t independent. They’re a network. Presenting a network as a sequence created a fundamental mismatch between the mental model and the interface.

We mapped the conditional logic that determined which paths users should take, then redesigned the journey to give users a clearer picture of what they were building before asking them to build it. We introduced hint text and a smaller set of contextual options instead of nine opaque choices upfront.
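To make the network-versus-sequence point concrete, here is a minimal sketch of how declared relationships could be held as a graph, with the follow-up options narrowed by an earlier answer. It is an illustration only: the relationship types, the entity types, and every name in the code (`Relationship`, `Network`, `contextual_options`) are assumptions, not the service's actual data model or categories.

```python
from dataclasses import dataclass, field

# Hypothetical option sets, for illustration only. The real service's
# relationship categories are not listed in this case study.
RELATIONSHIP_OPTIONS = {
    "organisation": ["parent company", "significant shareholder"],
    "individual": ["director", "significant shareholder"],
    "trust": ["trustee"],
}


@dataclass(frozen=True)
class Relationship:
    source: str  # the connected person or entity
    target: str  # the entity they own, control, or are associated with
    kind: str    # one of the (hypothetical) relationship types


@dataclass
class Network:
    """Declared relationships stored as edges, not as isolated answers."""
    relationships: list[Relationship] = field(default_factory=list)

    def add(self, source: str, target: str, kind: str) -> None:
        self.relationships.append(Relationship(source, target, kind))

    def connected_to(self, entity: str) -> list[Relationship]:
        """Everything declared so far as directly connected to `entity`."""
        return [r for r in self.relationships if r.target == entity]


def contextual_options(entity_type: str) -> list[str]:
    """Return only the options relevant to the answer already given,
    rather than presenting every relationship type upfront."""
    return RELATIONSHIP_OPTIONS.get(entity_type, [])
```

Holding the declarations as edges means the interface can show users what they have built so far (for example, everything connected to the supplier) before asking for the next relationship.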

Subsequent research showed the failure rate dropping significantly with each iteration.

Breaking convention: full disclosure

One of the most instructive decisions on the project involved a section called Financial and Economic Standing, where suppliers declare the possibility of prior audit findings and upload supporting evidence.

The initial approach was conventional: one thing per page, staged disclosure, three to five boolean questions depending on responses, file upload, check your answers. This is exactly what the design system was designed for.

Research showed it wasn’t working. The questions were structurally similar: different enough to require individual answers, but similar enough that users lost their orientation. They couldn’t see where the journey was going, which made it harder to answer the question in front of them. Completion rates and confidence both suffered.

We made a deliberate decision to break the pattern. Instead of staged disclosure, we used full disclosure: every question shown simultaneously, so users could see the complete scope of what they were being asked before committing to any answer.

This required justification. Breaking from a design system convention isn’t a small decision. We documented the research finding, articulated the alternative approach, stated the principle behind it (users needed the full picture to answer confidently), and tested it. The second research round confirmed improved understanding and task completion.

The craft: where detail becomes design

Three smaller decisions, each of which illustrates why the details are not small.

Hint text. Form fields are not self-describing. The role of hint text is to close the gap between designer intent and user understanding: not to explain what a field is for in abstract, but to answer the specific question a user is likely to be asking at that moment. On this service, hint text was treated as a design decision, not a copy task. Each piece of contextual guidance was written against a specific user need identified in research.

Address replication. Suppliers frequently needed to provide the same address for multiple entities: registered address, trading address, associated individuals’ addresses. We introduced an address replication feature: if a user had already provided an address, they could select it rather than re-enter it. Small change, significant friction reduction, measurable improvement in accuracy.
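As a rough sketch of the address replication idea, the logic amounts to collecting the addresses already entered, removing duplicates, and offering them as choices. The `Address` fields, the normalisation rule, and the function names below are assumptions for illustration, not the service's implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Address:
    line_1: str
    town: str
    postcode: str


def normalise(address: Address) -> tuple[str, str, str]:
    """Comparison key that ignores case and whitespace differences
    (an assumed rule; a real service may compare more strictly)."""
    parts = (address.line_1, address.town, address.postcode)
    return tuple("".join(part.split()).upper() for part in parts)


def reusable_addresses(entered: list[Address]) -> list[Address]:
    """Addresses already provided, de-duplicated, in first-entered order."""
    seen = set()
    unique = []
    for address in entered:
        key = normalise(address)
        if key not in seen:
            seen.add(key)
            unique.append(address)
    return unique
```

Because the user selects an existing record instead of retyping it, the same address cannot drift into slightly different spellings across entities, which is one plausible source of the accuracy improvement.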

Postcode search. The UK address entry journey was improved with postcode lookup: enter a postcode, select from matched addresses, confirm or edit. The implementation details mattered: how the results are presented, how edge cases (partial postcodes, no results, overseas addresses) are handled. We treated each of these as a distinct design problem, not an implementation detail. The solution met AAA accessibility standards.
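The edge cases named above can be sketched as a small routing function that decides which step the user sees next. This is a simplified illustration: the regular expressions are a rough approximation of UK postcode shape, not official validation, and the step names and the `lookup` callable are hypothetical stand-ins for whatever address service is used.

```python
import re
from typing import Callable

# Simplified shapes: outward code (e.g. "SW1A") and inward code (e.g. "1AA").
OUTWARD = r"[A-Z]{1,2}[0-9][A-Z0-9]?"
INWARD = r"[0-9][A-Z]{2}"
FULL_RE = re.compile(rf"^{OUTWARD}{INWARD}$")
PARTIAL_RE = re.compile(rf"^{OUTWARD}$")


def classify_postcode(raw: str) -> str:
    """Return 'full', 'partial', or 'invalid' for user-typed input."""
    compact = "".join(raw.split()).upper()
    if FULL_RE.match(compact):
        return "full"
    if PARTIAL_RE.match(compact):
        return "partial"
    return "invalid"


def next_step(raw: str,
              lookup: Callable[[str], list[str]],
              overseas: bool = False) -> tuple[str, list[str]]:
    """Pick the next step in the address journey (step names are hypothetical)."""
    if overseas:
        # Overseas addresses skip postcode lookup entirely.
        return "manual-entry", []
    if classify_postcode(raw) != "full":
        # Partial or malformed input: ask again instead of searching.
        return "ask-for-full-postcode", []
    matches = lookup("".join(raw.split()).upper())
    if not matches:
        # Valid shape but nothing found: offer manual entry.
        return "no-results-manual-entry", []
    return "select-address", matches
```

Each branch corresponds to a screen that needs its own content and error handling, which is why these cases are better treated as design problems than as implementation details.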

Aligning with policy

One of the more unusual challenges was designing pattern choices that could satisfy both user needs and policy requirements simultaneously. For certain sections, the policy intent prescribed a specific type of interaction. The design work wasn’t to override these requirements but to implement them in a way that users could actually follow. Sometimes that meant accepting a design that wasn’t ideal from a pure UX perspective, documenting why, and focusing effort on the elements that were within scope to improve.

Outcomes and Metrics

A service that started with 100% user failure on its most critical journey was iteratively improved through documented, research-led design decisions to a point where users could complete their compliance declarations with confidence.

Resolved 100% user failure on Connected Persons. The failure rate dropped significantly with each iteration after we restructured the journey to match the relational mental model.

Iterative improvement to confidence and completion. Full disclosure in Financial and Economic Standing improved understanding and task completion in the second research round.

Nine published design histories. The design histories for this project remain published and accessible. They show the full arc: from orientation through crisis through craft through delivery.

Related reading

- Dealing with complexity — Connected Persons iteration and the conditional logic model

- Full disclosure — When standard patterns break; designing outside convention with justification

- Find and select a UK address — Postcode search UX with AAA accessibility