Building a design capability, not just a product

Individual designers produce work. Design teams produce capability. This is a story about the latter.

Problem Statement

Four UCD teams. Ten to twelve practitioners each. Five live digital services running concurrently. Four years.

At this scale, the work stops being primarily about individual design decisions and starts being about the systems within which decisions get made. Who makes them, how they get validated, how they get documented, how they get communicated across teams that may never be in the same room.

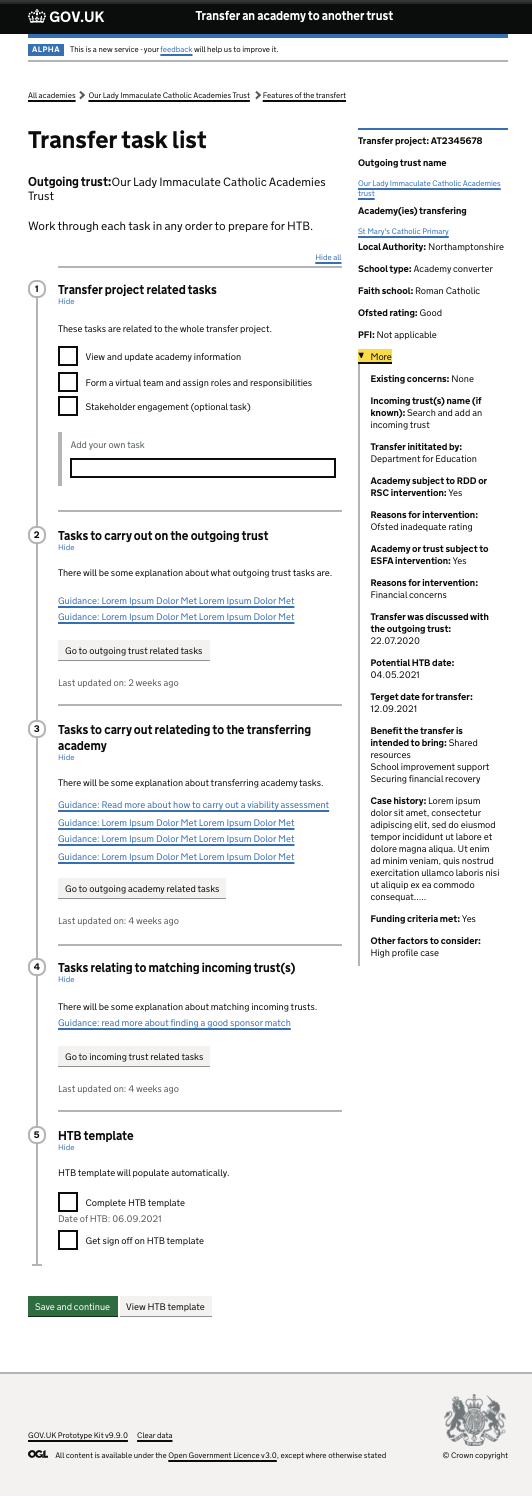

The five services were distinct in purpose but connected in context, all serving different parts of the same policy landscape, all under the same governance framework, all needing to meet the same standards. They ranged from external-facing applications used by schools and trusts, to internal tools used by staff managing complex casework, to hybrid services spanning both.

When I joined the programme, teams were doing good design work. But they were doing it independently. Decisions made on one service weren’t visible to the team working on an adjacent problem. Research findings weren’t being shared. Patterns being developed in one place were being re-invented elsewhere. And when team members left (as they do on long-running programmes), the knowledge they held left with them.

This isn’t a failure of the designers. It’s a failure of infrastructure. And it’s a design problem.

My Role and Process

The approach was to build infrastructure, not just deliver features. Design histories for documenting decisions. A CMS integration so anyone could publish without knowing the underlying technology. A shared Figma library for components and patterns. Cycle-based ways of working combining elements of Shape Up and Scrum.

Accessibility was treated as a systematic practice rather than a one-off audit, with issues identified, prioritised, and resolved continuously across the live service.

Design Solutions

Design histories and CMS

The most important thing we built was a system for documenting design decisions: not just what we shipped, but why. Why we tried something. What the research said. What we rejected and why. What the constraints were.

This practice, known as design histories, had been developed by another team elsewhere in the organisation. We adopted it, adapted it for our context, and embedded it across all five services.

But adoption required more than advocacy. Many designers on the teams couldn’t efficiently use the existing tooling. So we built a CMS integration: a content management interface, layered on top of the publishing system, that let anyone on the team write and publish a design history without needing to know how the underlying technology worked.

The result was a practice, not a product. Over time, the histories became the institutional memory of the programme. New team members could read the history of a service and understand why it looked the way it did. Stakeholders could see design thinking, not just design outputs.

Figma component library

Alongside the documentation practice, we built a shared Figma library: a set of components and patterns that could be used across all five services as a starting point for iteration.

The goal wasn’t consistency for its own sake. It was speed and quality: a designer starting a new feature shouldn’t spend the first week recreating components that already existed, and when components did need to evolve, the change should propagate across the programme rather than diverge. The library also created a shared vocabulary. Designers from different teams could reference a component by name and know they were talking about the same thing.

Research-led decisions at the detail level

Two examples of the kind of decision-making the programme produced.

Benefits and risks. One service asked delivery officers to record the potential risks and negative factors affecting an academy transfer. The section was labelled “Benefits and other factors.” In user interviews, officers consistently described the content as risks. The label didn’t match their mental model. We renamed the section “Benefits and risks.” A two-word change. In the next research round, users completed the section with noticeably more confidence.

Meaningful identifiers. The same service required a system for identifying individual transfer projects. We designed a reference number system based on region, transfer type, and sequence. We tested it. Users found it functional but slightly opaque. In a subsequent round, we tested an alternative: using the outgoing trust’s name as the primary identifier. Users clearly preferred the trust name, so we updated the design accordingly.

Neither of these decisions was dramatic. Both required observation, testing, and willingness to change based on what we found. Across five services and four years, this is how design quality compounds.

Accessibility as systematic practice

One of the services underwent a structured accessibility remediation programme. Not an audit conducted once at the end, but a sustained process of identifying, prioritising, and resolving issues across the live service.

The issues ranged from structural problems (missing page titles, inadequate error notifications) to interaction-level failures (upload components that didn’t communicate state to assistive technology, links that duplicated adjacent text without meaningful distinction). Each issue was documented, assessed for impact, designed against, implemented, and verified.

Outcomes and Metrics

Design histories embedded. By the end of the four years, the design histories system was embedded across the programme and actively maintained.

Figma library in use. The shared component library was in use across all five services.

New designers productive quickly. The strongest signal that the infrastructure worked: when new designers joined the programme, they could become productive quickly, not because they were exceptional, but because the knowledge was accessible. The design was in the history, not in someone’s head.

Accessibility remediation completed. Remediation was carried out systematically across the live service, with accessibility treated as a first-class design concern rather than a compliance checkpoint.

Ways of working outlasted turnover. The cycle-based approach survived several changes in team membership.

Related reading

- Design histories — What they are, why we use them, and why we built a CMS

- Vertical slice — Building an academy transfers prototype in a cycle

- Collaboration across disciplines — The model, research, pair programming, and CMS